Next, you should call the get() function to retrieve the contents of the web page. The first step towards fetching a web page is establishing a connection to the resource. Jsoup uses the object to represent web pages. To work with the DOM, you should have a parsable document markup. Browsers send their 'unique' user agents, so you can just mimic the browser behavior and send User-Agent of MSIE, or FireFox (for example). Next, I will be showing you how to fetch content from a web page using Jsoup. If you do not set it explicitly it will be something like 'Java-1.6'. We should first import all the libraries that will be needed in the project. However, it’s possible to use the Jsoup library directly from the terminal of your operating system as you will see later in this article. Understanding web scraping What does web scraping refer to Many sites do not provide their data under public APIs, so web scrapers extract data directly from the browser. Step 3: Click “OK” to close the dialog box. The article will provide a step-by-step tutorial on creating a simple web scraper using Java to extract data from websites and then save it locally in CSV format. Select the Java Build path from the list given on the leftĬlick the “Add external JARS…” button then navigate to where you have stored the Jsoup jar file. Step 2: Do the following on the Properties dialog:

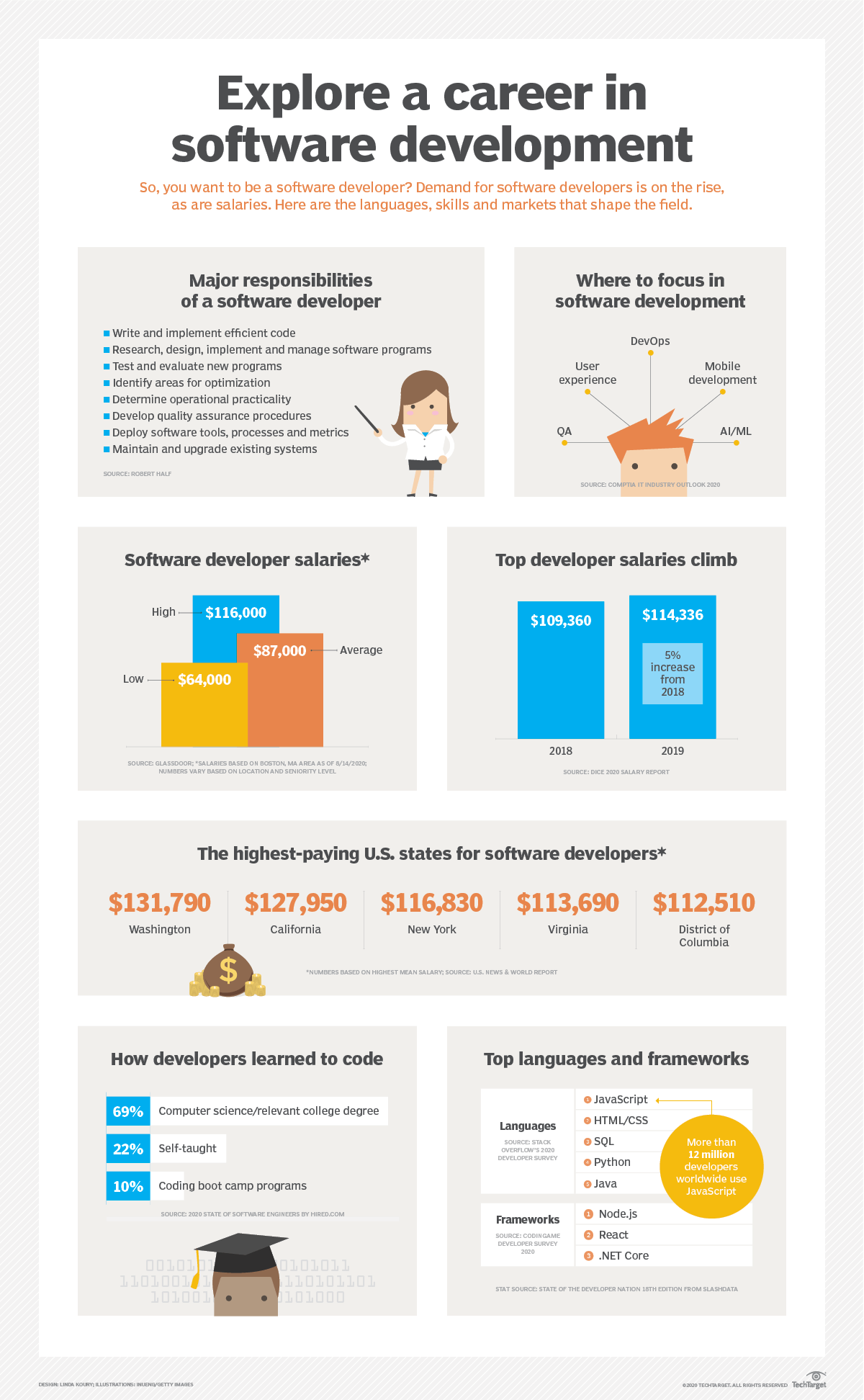

Step 1: Right click the project name on the Project Explorer and choose “Properties.” from the menu that pops up. In Eclipse, follow the steps given below: You need to download its jar file from Jsoup site and then reference it in your Java project. To use the Jsoup library, you MUST add it to your Java project. Manipulating HTML elements, text, and attributes Scraping and parsing HTML from a file, URL, or stringįinding and extracting data using CSS selectors or DOM traversal If you are good in jQuery, then working with Jsoup should be a walk in the park for you. Jsoup is open source and it was developed by Jonathan Hedley in 2009. Jsoup is a Java library that is made up of methods for extracting and manipulating HTML document content. In this article, I will be showing you how to scrape data from websites using Jsoup in Java and store the data in GridDB. Web scraping can speed up the data collection process and save you time. The work of the web scraper will be to scrape data about jobs from job listing websites of your choice and store it in a database such as GridDB. To make the process easier and save time, you can automate it by creating a web scraper using Jsoup. Searching for a job manually is boring and time-consuming. It means that you’ll have to invest a lot of time to look for the job. Suppose you’re looking for a job as a Java Programmer in Washington DC. However, I dont want the Reasearch Assistants to be able to change things on the original tables, since lot of the webscraper depends on the structure of the original tables. Then uploads to the database, it also checks for duplicates before uploading to the database. import java.io.BufferedInputStream import java.io.BufferedOutputStream import java.io.File import java.io.FileNotFoundException import java.io. Also note that it uses try-with-resources. The data is normally extracted from the HTML elements of the respective website. The webscraper scrapes from multiple websites everyday. 
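The connect-then-get flow described above can be sketched as follows. This is a minimal example assuming Jsoup is on the classpath; the Firefox User-Agent string, the 10-second timeout, and the default URL `https://example.com/` are illustrative assumptions, not values from the original setup.

```java
import java.io.IOException;

import org.jsoup.Connection;
import org.jsoup.Jsoup;
import org.jsoup.nodes.Document;

public class PageFetcher {

    // Assumption: a desktop Firefox User-Agent string; any current browser's string would do.
    public static final String USER_AGENT =
            "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:115.0) Gecko/20100101 Firefox/115.0";

    /** Step 1: configure the connection (URL, User-Agent, timeout); nothing is sent yet. */
    public static Connection connect(String url) {
        return Jsoup.connect(url)
                .userAgent(USER_AGENT) // mimic a browser instead of relying on the client default
                .timeout(10_000);      // give up after 10 seconds
    }

    public static void main(String[] args) throws IOException {
        // Hypothetical target page; replace it with the job-listing site you want to scrape.
        String url = args.length > 0 ? args[0] : "https://example.com/";
        // Step 2: get() sends the GET request and parses the response into a Document (the DOM).
        Document doc = connect(url).get();
        System.out.println("Title: " + doc.title());
    }
}
```

Keeping `connect()` separate from `get()` means the request can be configured (headers, timeout) before any network traffic happens, and the returned `Document` can then be queried with CSS selectors.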
Most websites make their data available to users via APIs. However, some websites have not developed such APIs, and to access data from such sites, we use web scraping: a technique for extracting data from website content. Let's create a simple Java web scraper that gets the title text from an example site. A related task is downloading an image from a direct link to the disk, into the project directory.
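Downloading an image from a direct link needs only the standard library. The sketch below assumes a hypothetical image URL; it copies the bytes through buffered streams inside a try-with-resources block and saves the file in the current working directory, which is typically the project directory when the program is run from Eclipse.

```java
import java.io.BufferedInputStream;
import java.io.BufferedOutputStream;
import java.io.FileOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.net.URI;
import java.nio.file.Path;
import java.nio.file.Paths;

public class ImageDownloader {

    /** Copies the resource at imageUrl into target and returns the number of bytes written. */
    public static long download(String imageUrl, Path target) throws IOException {
        long total = 0;
        // try-with-resources closes both streams, even if the copy fails halfway.
        try (InputStream in = new BufferedInputStream(URI.create(imageUrl).toURL().openStream());
             OutputStream out = new BufferedOutputStream(new FileOutputStream(target.toFile()))) {
            byte[] buffer = new byte[8192];
            int read;
            while ((read = in.read(buffer)) != -1) {
                out.write(buffer, 0, read);
                total += read;
            }
        }
        return total;
    }

    public static void main(String[] args) throws IOException {
        // Hypothetical direct image link; pass a real one as the first argument.
        String imageUrl = args.length > 0 ? args[0] : "https://example.com/images/logo.png";
        // Name the file after the last path segment and save it in the working directory.
        String fileName = imageUrl.substring(imageUrl.lastIndexOf('/') + 1);
        if (fileName.isEmpty()) {
            fileName = "download.bin";
        }
        long bytes = download(imageUrl, Paths.get(fileName));
        System.out.println("Saved " + bytes + " bytes to " + fileName);
    }
}
```

Because `URL.openStream()` also understands `file:` URLs, the same method can be tried offline against a local file before pointing it at a real site.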

Java is one of the most popular and in-demand programming languages today. It allows you to create highly scalable and reliable services as well as multi-threaded data extraction solutions. Let's check out the main concepts of web scraping with Java and review the most popular libraries for setting up your data extraction flow. In this article, we're going to explore different aspects of Java web scraping: retrieving data using HTTP/HTTPS calls, parsing HTML data, and running a headless browser to render JavaScript and avoid getting blocked. All those parts are essential, as not every website provides an API to access its data. To create a complete web scraper, you should consider covering the following features:

- data extraction (retrieve the required data from the website)
- data parsing (pick only the required information)
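For the parsing step, a minimal Jsoup sketch might look like this. It assumes a hypothetical listing markup (`div.job` elements with `.title` and `.location` children) and writes only those two fields to a `jobs.csv` file; a real job site will need its own selectors.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

import org.jsoup.Jsoup;
import org.jsoup.nodes.Document;
import org.jsoup.nodes.Element;

public class JobListingParser {

    /** Picks only the required fields from each listing and returns them as CSV lines. */
    public static List<String> toCsvRows(String html) {
        Document doc = Jsoup.parse(html);
        List<String> rows = new ArrayList<>();
        rows.add("title,location"); // header row
        // Hypothetical markup: each job is a <div class="job"> with .title and .location children.
        for (Element job : doc.select("div.job")) {
            String title = job.select(".title").text();
            String location = job.select(".location").text();
            rows.add(csv(title) + "," + csv(location));
        }
        return rows;
    }

    /** Quotes a field and doubles any embedded quotes, as RFC 4180 CSV expects. */
    public static String csv(String field) {
        return "\"" + field.replace("\"", "\"\"") + "\"";
    }

    public static void main(String[] args) throws IOException {
        // Hypothetical sample markup standing in for a downloaded page.
        String html = "<div class='job'><h2 class='title'>Java Programmer</h2>"
                + "<span class='location'>Washington, DC</span></div>";
        Path out = Paths.get("jobs.csv");
        Files.write(out, toCsvRows(html), StandardCharsets.UTF_8);
        System.out.println("Wrote " + out.toAbsolutePath());
    }
}
```

Quoting every field keeps values such as "Washington, DC", which contain commas, in a single CSV column.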
